Apparent versus Absolute Magnitude

May 15, 2021

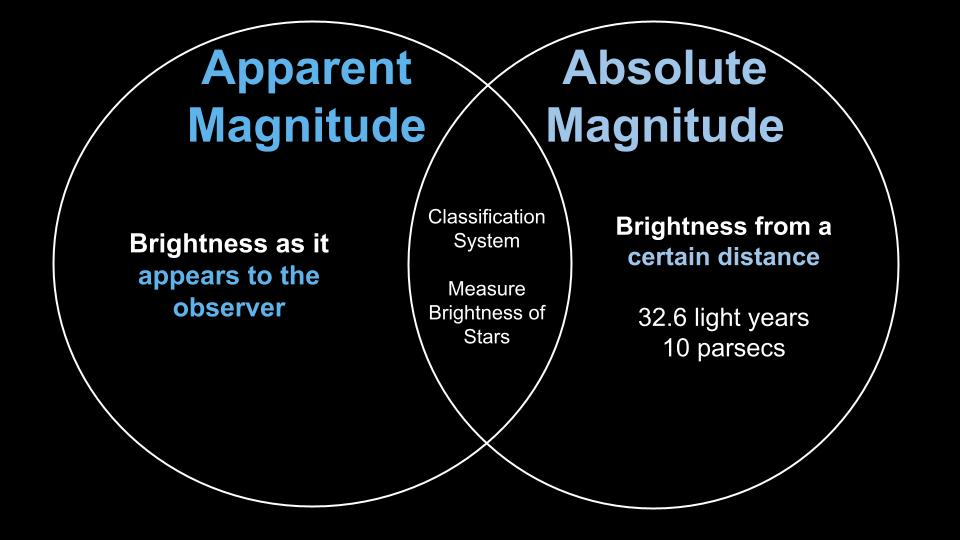

Stellar magnitude is a measure of how bright a star is. Astronomers describe brightness in two ways: apparent magnitude and absolute magnitude. On both scales, a lower number means a brighter star, so the very brightest objects have negative magnitudes.

Watch and learn the differences and similarities between the apparent magnitude and absolute magnitude of stars.

Apparent magnitude is the brightness of a star as it appears to the observer. This is what stargazers observe when they look at the sky and see that some stars are brighter than others.

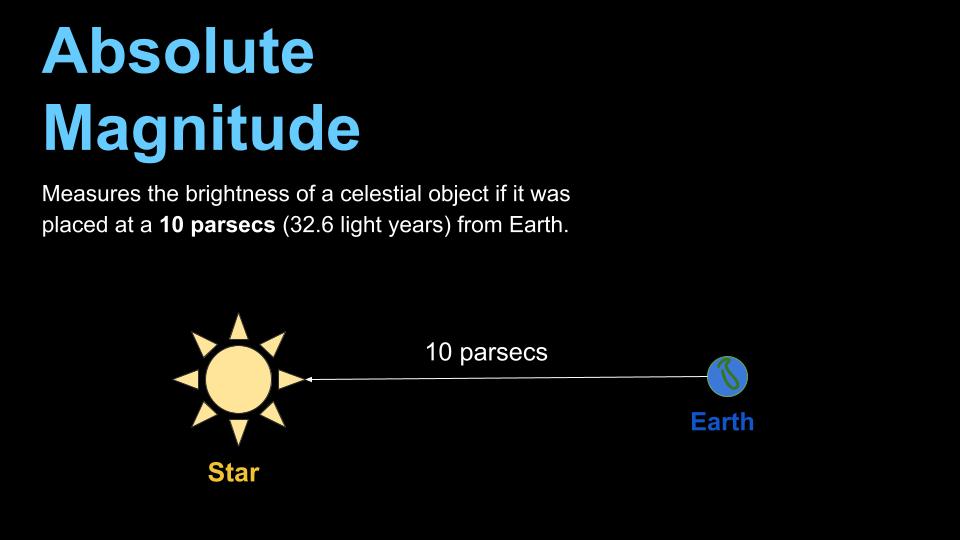

Absolute magnitude is the brightness a star would have if it were viewed from a standard distance of 10 parsecs. A parsec is equal to about 3.26 light-years, so 10 parsecs is about 32.6 light-years.

Each star has both an apparent magnitude and an absolute magnitude. For example, Sirius, the brightest star in the night sky, has an apparent magnitude of -1.46 and an absolute magnitude of +1.42. Sirius looks so bright to observers mainly because it is close by, only about 8.6 light-years away.
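The two magnitudes are linked by distance through a standard relation called the distance modulus: M = m - 5 log10(d / 10), where m is the apparent magnitude, M is the absolute magnitude, and d is the distance in parsecs. The short Python sketch below applies it to Sirius. The function name is just for illustration, and the distance of 8.6 light-years is an assumed value that is not given in the article.

```python
import math

# Rounded conversion factor quoted in the article.
LIGHT_YEARS_PER_PARSEC = 3.26


def absolute_magnitude(apparent_mag, distance_ly):
    """Magnitude the star would have if it were moved to 10 parsecs."""
    distance_pc = distance_ly / LIGHT_YEARS_PER_PARSEC
    # Distance modulus: M = m - 5 * log10(d / 10 pc)
    return apparent_mag - 5 * math.log10(distance_pc / 10)


# Sirius: apparent magnitude -1.46; distance of 8.6 light-years is assumed.
sirius_abs = absolute_magnitude(-1.46, 8.6)
print(round(sirius_abs, 2))
```

With these rounded inputs the result lands close to the +1.42 quoted above. A star that sits exactly 10 parsecs away would get the same number for both magnitudes.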

Now let's compare Sirius with another star. Antares is the alpha star of the constellation Scorpius. Its apparent magnitude ranges between 0.6 and 1.6. It has a range because it is a variable star, which means its brightness changes over time. The absolute magnitude of Antares is -5.9. With such a low absolute magnitude, Antares is intrinsically far more luminous than Sirius, even though Sirius looks brighter in our sky.
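To get a feel for how big that gap is, it helps to know that the magnitude scale is logarithmic: a difference of 5 magnitudes corresponds to a factor of 100 in brightness. The sketch below turns the two absolute magnitudes quoted here into a brightness ratio. The function name is hypothetical, and the comparison assumes both magnitudes were measured in the same band of light.

```python
def brightness_ratio(mag_fainter, mag_brighter):
    """How many times brighter the second object is than the first."""
    # Every 5 magnitudes is a factor of 100, so 1 magnitude is 100 ** (1 / 5).
    return 10 ** (0.4 * (mag_fainter - mag_brighter))


# Absolute magnitudes from the article: Sirius +1.42, Antares -5.9.
ratio = brightness_ratio(1.42, -5.9)
print(round(ratio))
```

Using the article's figures, Antares comes out at roughly 850 times as bright as Sirius when both are imagined at the same 10-parsec distance.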

Both measures are useful: apparent magnitude tells us how bright a star looks from Earth, while absolute magnitude lets us compare how much light different stars actually give off.